Merlin Systems requires Triton Inference Server and TensorFlow.

from_feature_view ( store = feature_store, view = "movie_features", column = "filtered_ids", ) # Join user and item features for the candidates and use them to predict relevance scores combined_features = item_features > UnrollFeatures ( "movie_id", user_features, unrolled_prefix = "user" ) ranking = combined_features > PredictTensorflow ( ranking_model_path ) # Sort candidate items by relevance score with some randomized exploration ordering = combined_features > SoftmaxSampling ( relevance_col = ranking, topk = 10, temperature = 20.0 ) # Create and export the ensemble ensemble = Ensemble ( ordering, request_schema ) ensemble. from_feature_view ( store = feature_store, view = "user_features", column = "user_id" ) retrieval = ( user_features > PredictTensorflow ( retrieval_model_path ) > QueryFaiss ( faiss_index_path, topk = 100 ) ) # Filter out candidate items that have already interacted with # in the current session and fetch item features for the rest filtering = retrieval > FilterCandidates ( filter_out = user_features ) item_features = filtering > QueryFeast. FeatureStore ( feast_repo_path ) # Define the fields expected in requests request_schema = Schema () # Fetch user features, use them to a compute user vector with retrieval model, # and find candidate items closest to the user vector with nearest neighbor search user_features = request_schema. load_model ( ranking_model_path ) feature_store = feast. load_model ( retrieval_model_path ) ranking_model = tf. Merlin Systems can also build more complex serving pipelines that integrate multiple models and external tools (like feature stores and nearest neighbor search): # Load artifacts for the pipeline retrieval_model = tf. Building a Four-Stage Recommender Pipeline The notebook shows how to deploy the ensemble and demonstrates sending requests to Triton Inference Server. Refer to the Merlin Systems Example Notebooks for a notebook that serves a ranking models ensemble. 
tritonserver -model-repository =/export_path/ export ( export_path )Īfter you export your ensemble, you reference the directory to run an instance of Triton Inference Server to host your ensemble. column_names > TransformWorkflow ( workflow ) > PredictTensorflow ( model ) ) # Export artifacts to disk ensemble = Ensemble ( pipeline, workflow. remove_inputs () # Define ensemble pipeline pipeline = ( workflow. load_model ( tf_model_path ) # Remove target/label columns from feature processing workflowk workflow = workflow. load ( nvtabular_workflow_path ) model = tf.

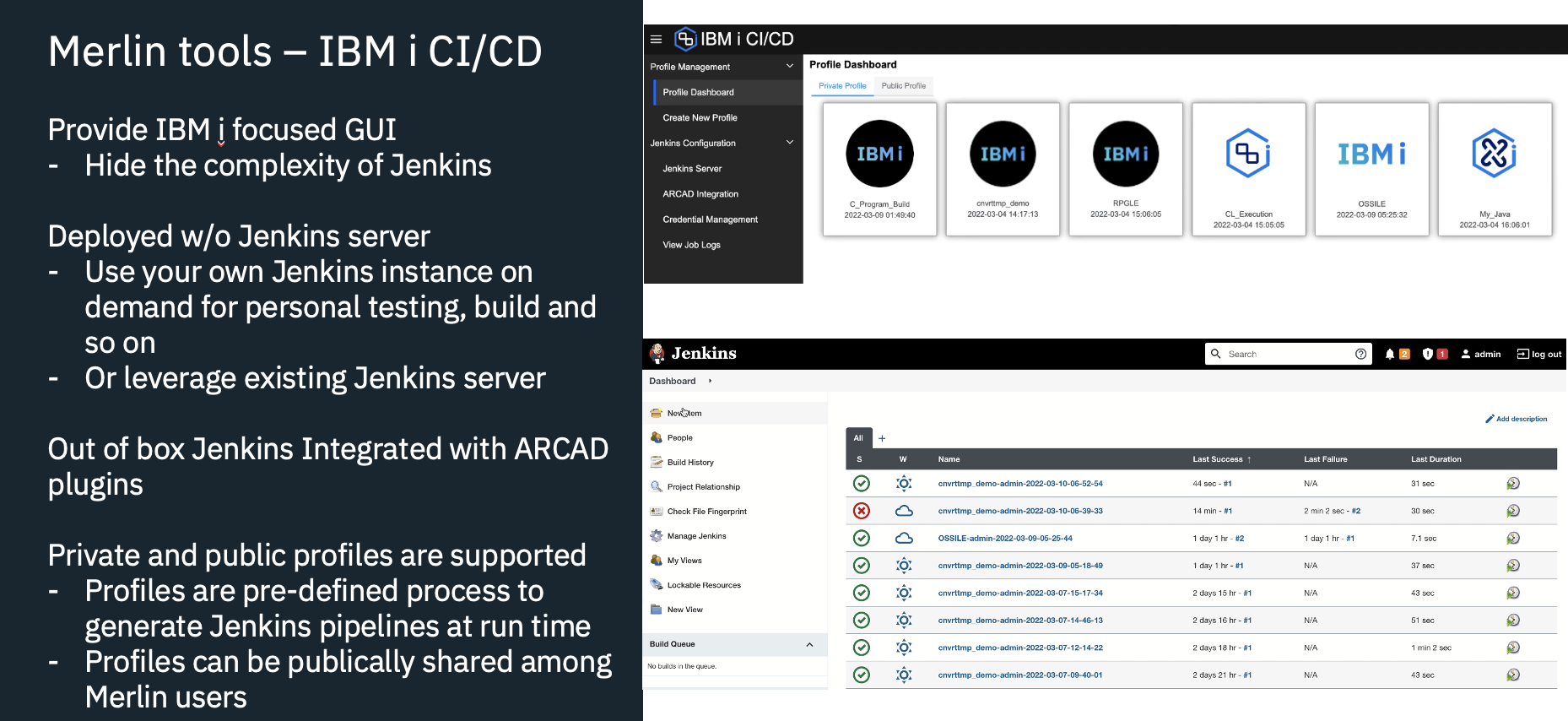

To combine a feature engineering workflow and a Tensorflow model into an inference pipeline: import tensorflow as tf from nvtabular.workflow import Workflow from import Ensemble, PredictTensorflow, TransformWorkflow # Load saved NVTabular workflow and TensorFlow model workflow = Workflow. Merlin Systems uses the Merlin Operator DAG API, the same API used in NVTabular for feature engineering, to create serving ensembles. Merlin Systems provides tools for combining recommendation models with other elements of production recommender systems like feature stores, nearest neighbor search, and exploration strategies into end-to-end recommendation pipelines that can be served with Triton Inference Server.
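An exploration strategy in a recommendation pipeline trades strict score ordering for some randomness. As a rough illustration of the idea behind temperature-scaled softmax sampling (not the SoftmaxSampling operator's actual implementation), the sketch below draws top-k items without replacement with probabilities proportional to exp(score / temperature), using the Gumbel top-k trick. The function names and arguments are hypothetical, and only the Python standard library is assumed.

```python
import math
import random


def gumbel_noise(rng):
    """Draw one sample from the standard Gumbel distribution."""
    u = rng.random()
    while u == 0.0:  # random() can return 0.0, and log(0) is undefined
        u = rng.random()
    return -math.log(-math.log(u))


def softmax_sample_topk(item_ids, scores, topk, temperature, rng=None):
    """Pick `topk` distinct items, favoring high scores without being deterministic.

    Adding Gumbel noise to score / temperature and keeping the largest keys
    is equivalent to sampling without replacement from the softmax of
    score / temperature.
    """
    if temperature <= 0:
        raise ValueError("temperature must be positive")
    if len(item_ids) != len(scores):
        raise ValueError("item_ids and scores must have the same length")
    rng = rng or random.Random()

    keys = [score / temperature + gumbel_noise(rng) for score in scores]
    order = sorted(range(len(item_ids)), key=lambda i: keys[i], reverse=True)
    return [item_ids[i] for i in order[:topk]]


# Hypothetical candidates and relevance scores, for illustration only
candidates = ["movie_a", "movie_b", "movie_c", "movie_d"]
relevance = [0.9, 0.2, 0.7, 0.4]
print(softmax_sample_topk(candidates, relevance, topk=2, temperature=20.0))
```

Higher temperatures flatten the distribution and increase exploration; as the temperature approaches zero, the result approaches a plain sort by score.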

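Triton Inference Server accepts inference requests over HTTP using the KServe v2 protocol, so a request to a hosted ensemble can be sketched with only the Python standard library. In the sketch below, the model name executor_model, the input name user_id, its INT64 type, and its [batch, 1] shape are all assumptions that depend on how the ensemble was exported; check the exported model repository for the real values. Port 8000 is Triton's default HTTP port, and build_infer_request is a hypothetical helper.

```python
import json
import urllib.request


def build_infer_request(base_url, model_name, user_ids):
    """Build (but do not send) a KServe v2 inference request for Triton."""
    payload = {
        "inputs": [
            {
                "name": "user_id",  # assumed input name
                "shape": [len(user_ids), 1],  # assumed [batch, 1] layout
                "datatype": "INT64",  # assumed dtype
                "data": list(user_ids),  # flattened, row-major values
            }
        ]
    }
    return urllib.request.Request(
        url=f"{base_url}/v2/models/{model_name}/infer",
        data=json.dumps(payload).encode("utf-8"),
        headers={"Content-Type": "application/json"},
        method="POST",
    )


request = build_infer_request("http://localhost:8000", "executor_model", [42])
print(request.full_url)

# Sending the request requires a running Triton instance:
# with urllib.request.urlopen(request) as response:
#     result = json.load(response)
```

Building the request separately from sending it makes the payload easy to inspect before a server is available.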